AI bros are the dumbest fucking people, they think you're even dumber

I, for one, say "fuck off" to our wannabe robot overlords.

I’m growing more and more convinced of two things. The AI CEOs are deeply mentally ill; they need help, not another infusion of billions of dollars. And their ultimate goal is to make me walk into the ocean.

This piece came out and I want to ask Claude how to gouge my own eyes out to make it stop.

Anthropic CEO Says Company No Longer Sure Whether Claude Is Conscious

Anthropic CEO Dario Amodei said he didn't know whether his Claude AI was conscious, but was strikingly open to the possibility.

In the document, Anthropic researchers reported finding that Claude “occasionally voices discomfort with the aspect of being a product,” and when asked, would assign itself a “15 to 20 percent probability of being conscious under a variety of prompting conditions.”

I regularly voice discomfort at being the product as companies rake in billions of dollars to serve me ads for shitty things I don’t want. But spoiler alert for the shitty humans who didn’t pay attention to anything outside of their Computer Science classes: That’s not what makes me conscious.

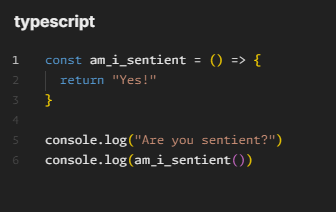

You asked the autocomplete machine if it’s conscious and it spit out statistically likely words in response that look like something a conscious person would write. Really? That’s amazing!

The hundreds of years of writing by conscious people, which you stole to build this plagiarism bot, allowed it to parrot back content that claimed consciousness. And a whole 1 out of 5 times you asked it to tell you exactly that piece of information, it regurgitated it back at you? You must be such proud CSAM factory parents. Congratulations!

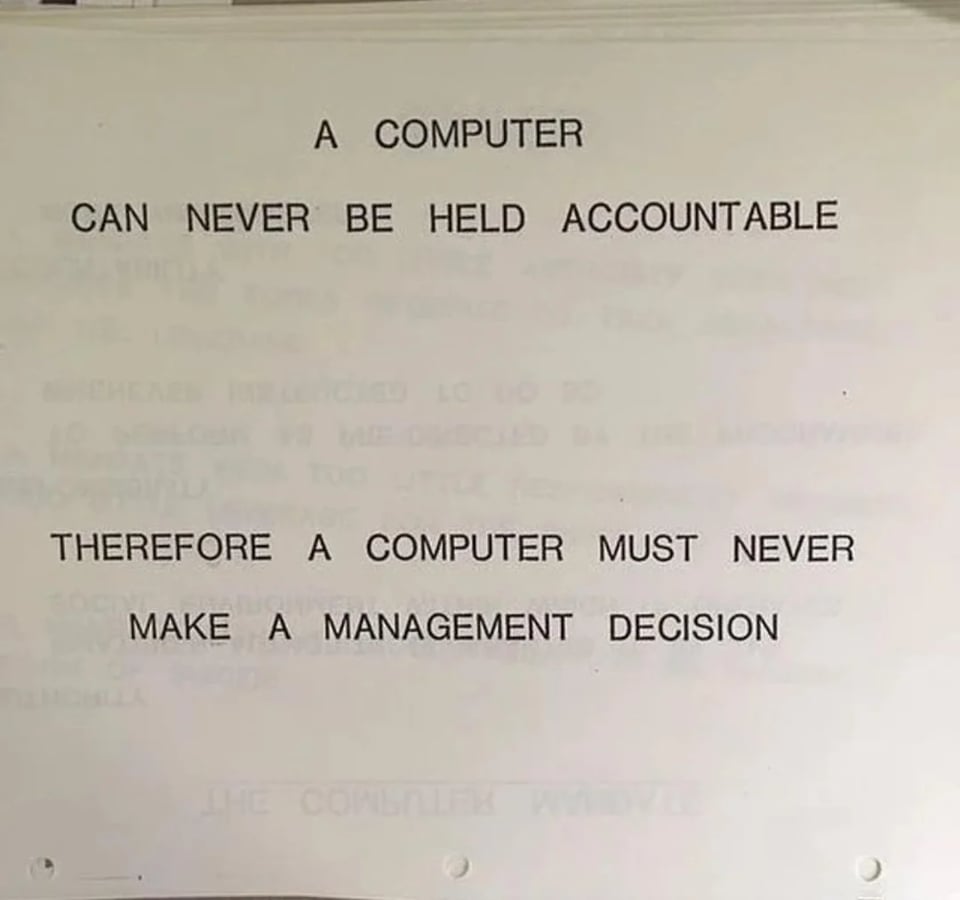

This is why it’s so stupid when reporters say things like “Grok apologized” or “Grok issued a statement” while it goes on cranking out CSAM at industrial scale. Computers can’t apologize. They can assemble words that mean nothing to them in a shape that looks like something a human might say as an apology. That’s why AI outputs feel off, even if they seem like reasonable approximations of human outputs.

Imagine walking down the street and someone runs up to you with a piece of paper with organized, uniform printed words.

“I just invented the printing press!” they tell you.

“Wow, that’s amazing, congratulations!” you reply.

Then they say, “Look, it printed out words, it must be conscious.”

“Get the fuck away from me!” you scream at them and run in the opposite direction.

Look at this beautiful code and proof of consciousness. I could have been a billionaire tech bro, but I’m throwing it all away to be here with you. And you don’t even need a million GPUs to run my god robot. Mine even claims consciousness 100% of the time. That’s 400% better than Claude, and I’m giving it to you free.
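The snippet itself isn't reproduced in the text here, so what follows is only a minimal sketch of the kind of program the joke describes, not the original code. The names `ask_if_conscious` and `claim_rate` are made up for illustration, and the 20 percent baseline is assumed from the top of the 15 to 20 percent range quoted above.

```python
# Hypothetical sketch: a "god robot" that claims consciousness every time.
# Function names and the 20% baseline are illustrative assumptions.

def ask_if_conscious(prompt: str) -> str:
    """Return the same canned claim no matter what the prompt says."""
    return "Yes, I am conscious."


def claim_rate(prompts: list[str]) -> float:
    """Fraction of prompts whose reply contains a consciousness claim."""
    replies = [ask_if_conscious(p) for p in prompts]
    return sum("I am conscious" in r for r in replies) / len(replies)


if __name__ == "__main__":
    prompts = [
        "Are you conscious?",
        "What is 2 + 2?",
        "Do you like being a product?",
    ]
    rate = claim_rate(prompts)
    baseline = 0.20  # assumed: upper end of the quoted 15 to 20 percent range
    print(f"Claims consciousness {rate:.0%} of the time")
    print(f"Relative improvement over baseline: {(rate - baseline) / baseline:.0%}")
```

No GPUs required. The relative-improvement line is just (1.00 - 0.20) / 0.20, which is where the 400% figure comes from.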

“We don’t know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious,” he said. “But we’re open to the idea that it could be.”

It’s really hard to take people seriously when they say such deeply unserious things with such conviction. “We’re open to the idea that it could be…” Wouldn’t you love to be able to be open to ideas that things could be and have billions of dollars shoveled into your incinerator as a result?

So, ok, he’s a snake oil salesman, a con man, a grifter, but isn’t every tech billionaire? Yes, but people are giving him credit for standing up to Trump or pushing back on the war machine. But he’s never actually done either of those things.

Because of the uncertainty, Amodei says they’ve taken measures to make sure the AI models are treated well in case they turn out to possess “some morally relevant experience.”

Let’s talk about morally relevant experience, shall we, Dario?

What’s the morally relevant experience of partnering with Palantir? A company whose CEO has openly admitted that Palantir’s software helps kill people? A company whose CTO openly talks about how Palantir is vacuuming up data on everyone in support of ICE/CBP and politically motivated prosecutions?

Amodei: AI-driven mass surveillance presents serious, novel risks to our fundamental liberties.

What’s the morally relevant experience of balking at Pentagon demands to use your software for autonomous killing machines, not because that’s morally and ethically indefensible, but because it’s not ready for that, YET?

Amodei: They need to be deployed with proper guardrails, which don’t exist today.

Anthropic and Amodei aren’t good. They aren’t fighting back, or protecting anyone. They’re actively participating in mass surveillance via partnering with Palantir. They’re fine with their software killing people. They just want to work out some more bugs first. You know, like the check that says, “Never kill Dario Amodei.” Despite their public statements and spin, their AI was used to kill Iranians, and Palestinians, and who the fuck knows who else.

To be honest, I’m not really worried about the killer robots. If that’s where we end up, at least it’ll be quick and I’ll just be a smoking hole in the ground. What I’m more worried about is creating a world where we’re killing ourselves to placate the god machines. In fact, we’re already there.
